Sponsored Content

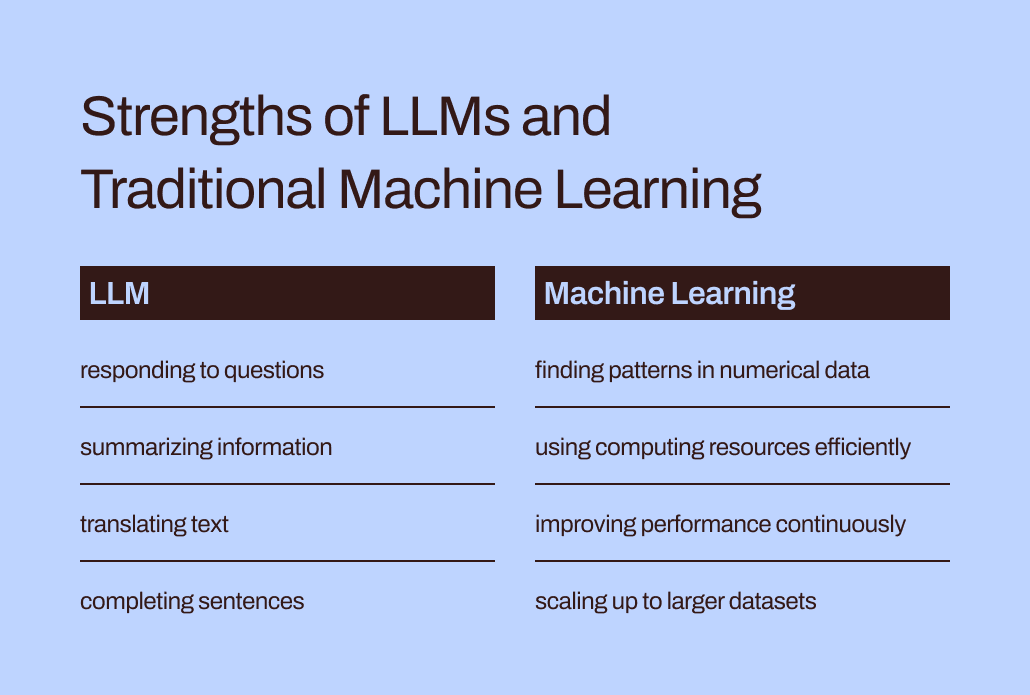

ChatGPT and similar tools based on large language models (LLMs) are amazing. But they aren’t all-purpose tools.

It’s just like choosing other tools for building and creating. You need to pick the right one for the job. You wouldn’t try to tighten a bolt with a hammer or flip a hamburger patty with a whisk. The process would be awkward, resulting in a messy failure.

LLMs constitute only one part of the broader machine learning toolkit, which encompasses both generative AI and predictive AI. Selecting the correct type of machine learning model is crucial to meeting the requirements of your task.

Let’s dig deeper into why LLMs are a better fit for helping you draft text or brainstorm gift ideas than for tackling your business’s most critical predictive modeling tasks. There’s still a vital role for the “traditional” machine learning models that preceded LLMs and have repeatedly proven their worth in businesses. We’ll also explore a pioneering approach for using these tools together — an exciting development we at Pecan call Predictive GenAI.

LLMs are designed for words, not numbers

In machine learning, different mathematical methods are used to analyze what is known as “training data” — an initial dataset representing the problem that a data analyst or data scientist hopes to solve.

The significance of training data can’t be overstated. It holds within it the patterns and relationships that a machine learning model will “learn” to predict outcomes when it’s later given new, unseen data.
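To make “learning from training data” concrete, here is a minimal sketch in plain Python. The dataset, the column meanings (monthly visits and monthly spend), and the helper names `fit_line` and `predict` are all hypothetical, and a one-feature least-squares line stands in for the far richer models used in practice.

```python
# Hypothetical training data: (monthly_visits, monthly_spend) pairs.
training_data = [(1, 12.0), (2, 22.0), (3, 32.0), (4, 42.0), (5, 52.0)]


def fit_line(pairs):
    """Ordinary least squares for a single feature: returns (slope, intercept)."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    variance = sum((x - mean_x) ** 2 for x, _ in pairs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(model, x):
    """Apply the learned pattern to a new, unseen input."""
    slope, intercept = model
    return slope * x + intercept


model = fit_line(training_data)   # "learning" the pattern
print(predict(model, 8))          # predicting for a value not in the training data
```

The model never saw a customer with eight visits, but the relationship it extracted from the training data lets it produce an estimate anyway.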

So, what specifically is an LLM? Large language models, or LLMs, fall under the umbrella of machine learning. They are deep learning models whose architecture was developed specifically for natural language processing.

You might say they’re built on a foundation of words. At their core, they are trained to predict which word (or, more precisely, which token, a word or word fragment) will come next in a sequence. For example, the iPhone’s autocorrect feature in iOS 17 uses a transformer-based language model to better predict the word you most likely intend to type next.
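The next-word objective can be illustrated with a toy sketch. This is not how an LLM is implemented: real LLMs use large neural networks over tokens, while the code below simply counts which word follows which in a tiny, made-up corpus. The function names and the corpus are assumptions for illustration only.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        followers[current_word][next_word] += 1
    return followers


def predict_next_word(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = model.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Hypothetical training text.
corpus = "the cat sat on the mat and the cat ate the fish"
model = train_bigram_model(corpus)

print(predict_next_word(model, "the"))  # most common word after "the" in the corpus
print(predict_next_word(model, "dog"))  # a word the model never saw
```

Even this toy version shows the key point: everything the model “knows” is about sequences of words, not about rows of numbers.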

Now, imagine you’re a machine learning model. (Bear with us, we know it’s a stretch.) You’ve been trained to predict words. You’ve read and studied millions of words from a vast range of sources on all kinds of topics. Your mentors (aka developers) have helped you learn the best ways to predict words and create new text that fits a user’s request.

But here’s a twist. A user now gives you a massive spreadsheet of customer and transaction data, with millions of rows of numbers, and asks you to predict numbers related to this existing data.

How do you think your predictions would turn out? First, you’d probably be annoyed. (Fortunately, as far as we know, LLMs don’t yet have feelings.) More importantly, you’re being asked to do a task that doesn’t match what you worked so hard to learn, so you probably won’t perform very well.

The gap between training and task helps explain why LLMs aren’t well-suited for predictive tasks involving numerical, tabular data — the primary data format most businesses collect. Instead, a machine learning model specifically crafted and fine-tuned for handling this type of data is more effective. It’s literally been trained for this.

LLMs’ efficiency and optimization challenges

In addition to being a better match for numerical data, traditional machine learning methods are far more efficient and easier to optimize for better performance than LLMs.

Let’s go back to your experience impersonating an LLM. Reading all those words and studying their style and sequence sounds like a ton of work, right? It would take a lot of effort to internalize all that information.

Similarly, LLMs’ complex training can result in models with billions of parameters. That complexity allows these models to understand and respond to the tricky nuances of human language. However, all those parameters come with heavy-duty computational demands, both during training and every time an LLM generates a response. Numerically oriented “traditional” machine learning algorithms, like decision trees or compact neural networks, will likely need far fewer computing resources. And this isn’t a case of “bigger is better.” Even if LLMs could handle numerical data, this difference would mean that traditional machine learning methods would still be faster, more efficient, more environmentally sustainable, and more cost-effective.

Additionally, have you ever asked ChatGPT how it knew to provide a particular response? Its answer will likely be a bit vague:

“I generate responses based on a mixture of licensed data, data created by human trainers, and publicly available data. My training also involved large-scale datasets obtained from a variety of sources, including books, websites, and other texts, to develop a wide-ranging understanding of human language. The training process involves running computations on thousands of GPUs over weeks or months, but exact details and timescales are proprietary to OpenAI.”

How much of the “knowledge” reflected in that response came from the human trainers vs. the public data vs. books? Even ChatGPT itself isn’t sure: “The relative proportions of these sources are unknown, and I don’t have detailed visibility into which specific documents were part of my training set.”

It’s a bit unnerving to have ChatGPT provide such confident answers to your questions but not be able to trace its responses to specific sources. LLMs’ limited interpretability and explainability also pose challenges in optimizing them for particular business needs. It can be hard to understand the rationale behind their information or predictions. To further complicate things, certain businesses contend with regulatory demands that mean they must be able to explain the factors influencing a model’s predictions. All in all, these challenges show that traditional machine learning models — generally more interpretable and explainable — are likely better suited for business use cases.
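By contrast, a simple traditional model can state exactly why it made a prediction. The sketch below fits a one-rule decision tree (a “decision stump”) to a hypothetical churn table. The data, the feature name `days_since_last_purchase`, and the helper `fit_stump` are illustrative assumptions, and production models typically combine many features and many trees.

```python
# Hypothetical customer records: (days_since_last_purchase, churned).
customers = [(5, 0), (12, 0), (20, 0), (33, 0), (41, 1), (58, 1), (75, 1), (90, 1)]


def fit_stump(rows):
    """Find the threshold on one feature that misclassifies the fewest rows.

    The learned rule is: predict churn (1) when the feature exceeds the threshold.
    """
    values = sorted(value for value, _ in rows)
    # Candidate thresholds are the midpoints between neighbouring values.
    candidates = [(a + b) / 2 for a, b in zip(values, values[1:])]
    best_threshold, best_errors = None, len(rows) + 1
    for threshold in candidates:
        errors = sum((value > threshold) != bool(label) for value, label in rows)
        if errors < best_errors:
            best_threshold, best_errors = threshold, errors
    return best_threshold, best_errors


threshold, errors = fit_stump(customers)
print(f"Rule: predict churn if days_since_last_purchase > {threshold}")
print(f"Training errors: {errors}")
```

The entire model is one readable rule, so the factor driving each prediction can be explained to a colleague or a regulator in a single sentence.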

The right place for LLMs in businesses’ predictive toolkit

So, should we just leave LLMs to their word-related tasks and forget about them for predictive use cases? It might now seem like they can’t assist with predicting customer churn or customer lifetime value after all.

Here’s the thing: While saying “traditional machine learning models” makes those techniques sound widely understood and easy to use, we know from our experience at Pecan that businesses are still largely struggling to adopt even these more familiar forms of AI.

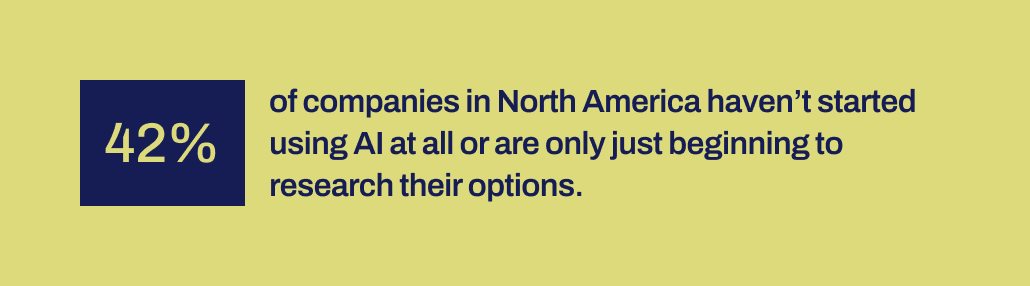

Recent research by Workday reveals that 42% of companies in North America either haven’t initiated the use of AI or are just in the early stages of exploring their options. That’s despite machine learning tools having become more accessible to companies over a decade ago. Businesses have had the time, and a variety of tools are available.

Despite the massive buzz around data science and AI, and their acknowledged potential for significant business impact, successful AI implementations have been surprisingly rare. Something is missing that would bridge the gap between the promises made by AI and the ability to implement it productively.

And that’s precisely where we believe LLMs can now play a vital bridging role. LLMs can help business users cross the chasm between identifying a business problem to solve and developing a predictive model.

With LLMs in the picture, business and data teams that don’t have the capability or capacity to hand-code machine learning models can better translate their needs into models. They can “use their words,” as parents like to say, to kickstart the modeling process.

Fusing LLMs with machine learning techniques built to excel on business data

That capability has now arrived in Pecan’s Predictive GenAI, which fuses the strengths of LLMs with our already highly refined and automated machine learning platform. Our LLM-powered Predictive Chat gathers input from a business user to guide the definition and development of a predictive question: the specific problem the user wants to solve with a model.

Then, using GenAI, our platform generates a Predictive Notebook to make the next step toward modeling even easier. Again drawing on LLM capabilities, the platform pre-fills the notebook with SQL queries that select the training data for the predictive model. Pecan’s automated data preparation, feature engineering, model building, and deployment capabilities can carry out the rest of the process in record time, faster than any other predictive modeling solution.
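To give a feel for what a training-data query can look like, here is a minimal sketch using Python’s built-in `sqlite3` module. It is not output from Pecan’s platform: the `transactions` table, its columns, the cutoff date, and the simple churn definition are all hypothetical assumptions chosen for illustration.

```python
import sqlite3

# Build a small in-memory table of hypothetical transactions.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE transactions (customer_id INTEGER, purchase_date TEXT, amount REAL);
    INSERT INTO transactions VALUES
        (1, '2023-01-10', 40.0),
        (1, '2023-02-15', 60.0),
        (1, '2023-04-02', 25.0),
        (2, '2023-01-20', 15.0),
        (3, '2023-03-05', 80.0),
        (3, '2023-03-28', 20.0);
""")

# Features use only purchases before the cutoff. The label marks customers
# with no purchase on or after the cutoff (a simple stand-in for churn).
TRAINING_QUERY = """
    SELECT
        customer_id,
        COUNT(CASE WHEN purchase_date < :cutoff THEN 1 END) AS purchases_before,
        COALESCE(SUM(CASE WHEN purchase_date < :cutoff THEN amount END), 0) AS spend_before,
        CASE WHEN MAX(purchase_date) < :cutoff THEN 1 ELSE 0 END AS churned
    FROM transactions
    GROUP BY customer_id
    ORDER BY customer_id
"""

training_rows = conn.execute(TRAINING_QUERY, {"cutoff": "2023-03-15"}).fetchall()
for row in training_rows:
    print(row)  # (customer_id, purchases_before, spend_before, churned)
```

Each output row pairs what was known about a customer before the cutoff with what happened afterward, which is the shape of data a predictive model needs in order to learn.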

In short, Pecan’s Predictive GenAI uses the unparalleled language skills of LLMs to make our best-in-class predictive modeling platform far more accessible and friendly for business users. We’re excited to see how this approach will help many more companies succeed with AI.

So, while LLMs alone aren’t well suited to handle all your predictive needs, they can play a powerful role in moving your AI projects forward. By interpreting your use case and giving you a head start with automatically generated SQL code, Pecan’s Predictive GenAI is leading the way in uniting these technologies. You can check it out now with a free trial.